Fact Finder

This is genuinely a landmark article. Apologies if already posted.

Infineon, BrainChip, Daimler, Volkswagen - High-tech stocks at their best!

The global automotive business is operating according to new laws. That is because e-mobility is a done deal, and if politicians' pronouncements are anything to go by, combustion engines will be history from 2030. As a result, the prerequisites for the industry are also changing because... (news.financial)

BRAINCHIP HOLDINGS - PARTNERSHIPS WITH EDGE IMPULSE AND ARM ANNOUNCED

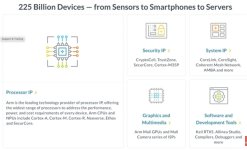

BrainChip Holdings is an Australian semiconductor company that manufactures a revolutionary neuromorphic processor called "Akida". The Akida processor can learn on its own while in use, and it improves the overall performance of artificial intelligence systems by reducing latency and power consumption. The new AI-capable chip covers a wide range of application areas, including control of autonomous vehicles, IoT devices and drones.

BrainChip recently announced that it is entering into a new partnership with Edge Impulse to develop next-generation machine learning platforms. Both companies aim to make BrainChip's neuromorphic technology, which is based on Spiking Neural Networks, widely applicable. The partnership will combine the two partners' existing technologies to achieve shorter development cycles, and it is also expected to accelerate time-to-market and so provide a competitive advantage. Edge Impulse is a leader in edge AI development, and together with BrainChip it plans to develop smart devices with AI/ML capabilities.

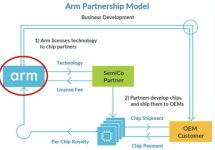

And another highlight: according to recent news, the Australians have been accepted into the AI Partner Program of ARM, one of the biggest names in the chip sector. The British company's blueprints are the dominant standard for smartphones, tablets and mobile IoT devices. With its inclusion in market giant ARM's AI Partner Program, BrainChip has once again landed a major deal. It will allow the chipmaker to significantly expand the commercial reach of its Akida processors in the future.

Technology company BrainChip Holdings has come under a lot of pressure since the sell-off on the NASDAQ. After rising to over EUR 1.60 in January, the share price fell back to EUR 0.65 by May, before jumping to EUR 0.88 at the beginning of the week. Should the correction on the NASDAQ be over, the BrainChip share will certainly be one of the high-flyers again.

In the bad old days, BrainChip would occasionally get a paragraph, if we were lucky, in an afterthought sort of way, while Intel's Loihi would flow through the whole piece.

No more. BrainChip gets to feature in its own right, then gets to pop up again in the segment on Mercedes v Volkswagen.

The progress being made by BrainChip is clear, and Blind Freddie cannot understand how some sighted people cannot see the change that is sweeping across the globe.

My opinion only DYOR

FF

AKIDA BALLISTA